Scaling Inference-Time Reasoning Will Enable Fully-Autonomous Mathematics Researchers

The first of a series of posts forecasting AI progress

A fully-autonomous mathematics researcher must do two things. It must solve open mathematics problems. And it must pose interesting mathematics problems within its reach to solve. I will argue that reasoning will scale to enable these two things.

Solving Open Math Problems

That reasoners will scale to solving interesting problems seems likely since such problems are verifiable (using autonomous proof checkers). Some disagree (here’s a relevant LessWrong post), taking the position that solving open problems is fundamentally different to solving closed problems. LLMs, they argue, have the latent ability to solve these problems because the knowledge is present in the data they’re trained on. And, they argue, the reinforcement learning process by which inference-time reasoners are trained simply elicits this latent ability—but since no such latent ability exists when it comes to solving novel problems, there’s a fundamental difference between the two, and we have no reason to suppose that reasoners will scale to solving novel problems any time soon.

But this is just a matter of how one chooses to carve out nature. Just as the techniques required to solve closed problems are available in the corpora of human data on which LLMs are trained, so too are the more abstract techniques required to solve open problems. The main distinction between the two is not some fundamental difference in nature, but rather the time horizon over which each occurs. Applying the techniques required to solve closed problems takes minutes or hours. Applying the techniques required to solve open problems takes days or weeks (and perhaps up to, effectively, indefinitely longer).

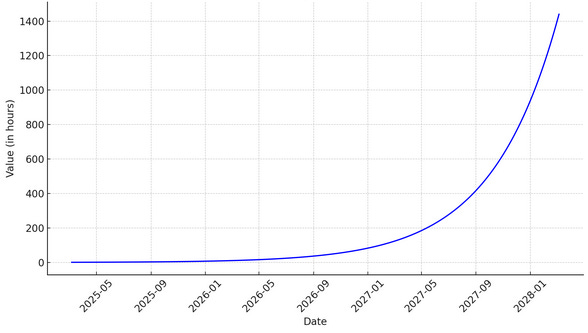

This chart describes which mathematics tasks AI can solve in terms of how long the task takes a skilled human to do. It’s based on extrapolative research by METR.

The fact that LLMs cannot yet apply the time-consuming techniques required to solve open problems is in fact largely uninformative given the time-horizon focused perspective which seems correct, and the informative evidence we do have (that the techniques which are within current-AI’s time horizons are successfully carried out by AI) suggests that we should expect that AI will be able to successfully carry out techniques which are within future-AI’s time horizons—assuming they’re not fundamentally different in kind.

Posing Interesting Problems

The ability to solve open questions is not sufficient for fully autonomous research. The reasoner must also be able to self-direct—to pose problems which are at once interesting and reasonably likely to be within the its reach to solve.

One might ask: “Can we not simply use the argument we just used to demonstrate that reasoners will scale to solve open questions, to demonstrate also that they will scale to be able to pose problems? Is this too not just a matter of time-horizons?”. Unfortunately, we cannot. The problems which reasoners have been able to solve so far are verifiable tasks. Solving open problems is also a verifiable task, so is not of a fundamentally different kind, which is why our argument above held. But we have yet to demonstrate that self-direction in research is a task which we will be able to make verifiable. So, because we have not established that posing problems does not belong to that class of tasks at which today’s reasoning models do not perform well, we have more work to do yet.

I’m going to break down what solving open problems will involve, and show that the techniques involved include a limited kind of self-direction in finding problems to solve, and that by transitive property this limited self-direction is a verifiable task. Then I will show that this limited self-direction can be used as a source of synthetic data on which models can be trained, enabling indefinite, fully self-directed research.

Solving open problems requires novel insight. Novel insight realistically requires trial-and-error: one comes up with an idea and sees if it goes anywhere. The AI is coming up with various potential novel ideas, and, assuming that LLMs of this scale are not fundamentally incapable of this kind of skill, should form a strong intuition about what kind of ideas tend to lead to successfully solving problems. Now, this may be a kind of self-direction, but it’s not self-directed problem-posing. So, we’re not where we want to be quite yet.

But most open problems are more difficult than this. One can’t just come up with a single idea that gets one straight to the solution. Rather, one has to make progress incrementally. Find some small property which one didn’t notice in one’s initial attempt to solve the problem. Play around with the implications. Try to solve the problem again, make some incremental progress. Find some more properties. Some more implications. Gradually, by selecting the right sub-problems to work on, one works toward solving the given problem. This process requires an understanding of how to find the kinds of problems are within one’s reach, and how to find problems which yield interesting implications. This is self-directed problem-posing, as an instrumental good to a verifiable task. So, by transitive property, this kind of self-directed problem-posing is a verifiable task.

But this is still just limited self-directed problem-posing. Yes, once the AI has a difficult problem given to it, it can do its best to pose problems the solutions to which will be instrumentally useful to solving the given problem. But it still requires a human-in-the-loop supplying the AI with its overall research direction.

But the problem-solving model’s reasoning traces are synthetic data which describe the process of finding problems to solve which are within the reasoner’s reach and which have useful implications. This synthetic data can be used to train a reasoner to continuously pose and solve interesting problems. The details of this are left as an exercise for the reader.

So, I have presented two arguments. One that reasoners will scale to solving open problems, and one that the reasoning traces can be used to train reasoners which autonomously pose novel problems. This kind of reasoner will be a fully-autonomous mathematics researcher.

I think the second argument is neat and convincing. -1 points for giving the reader an exercise 😠. But yeah basically for any harder verifiable task an implicit subcapability that will be verifiably trained is long term planning, bc you need to make plans and break down the problem into parts when the problem is hard. I would further guess that part of what makes current models not great at this is that the math environments with verifiable rewards are usually pretty short problems, and there’s possibly much more long term planning progress to be made. Then, the reasoning traces naturally learn to make long term plans, which in a way is what ‘asking the right questions’ means in math? Not entirely but it’s a big part of it. Idrk about the step after that, and clearly you don’t either…